Every signal. Explained.

Live auto-refreshes every 15s. Plain-English explanations. Suggested fixes for every failure.

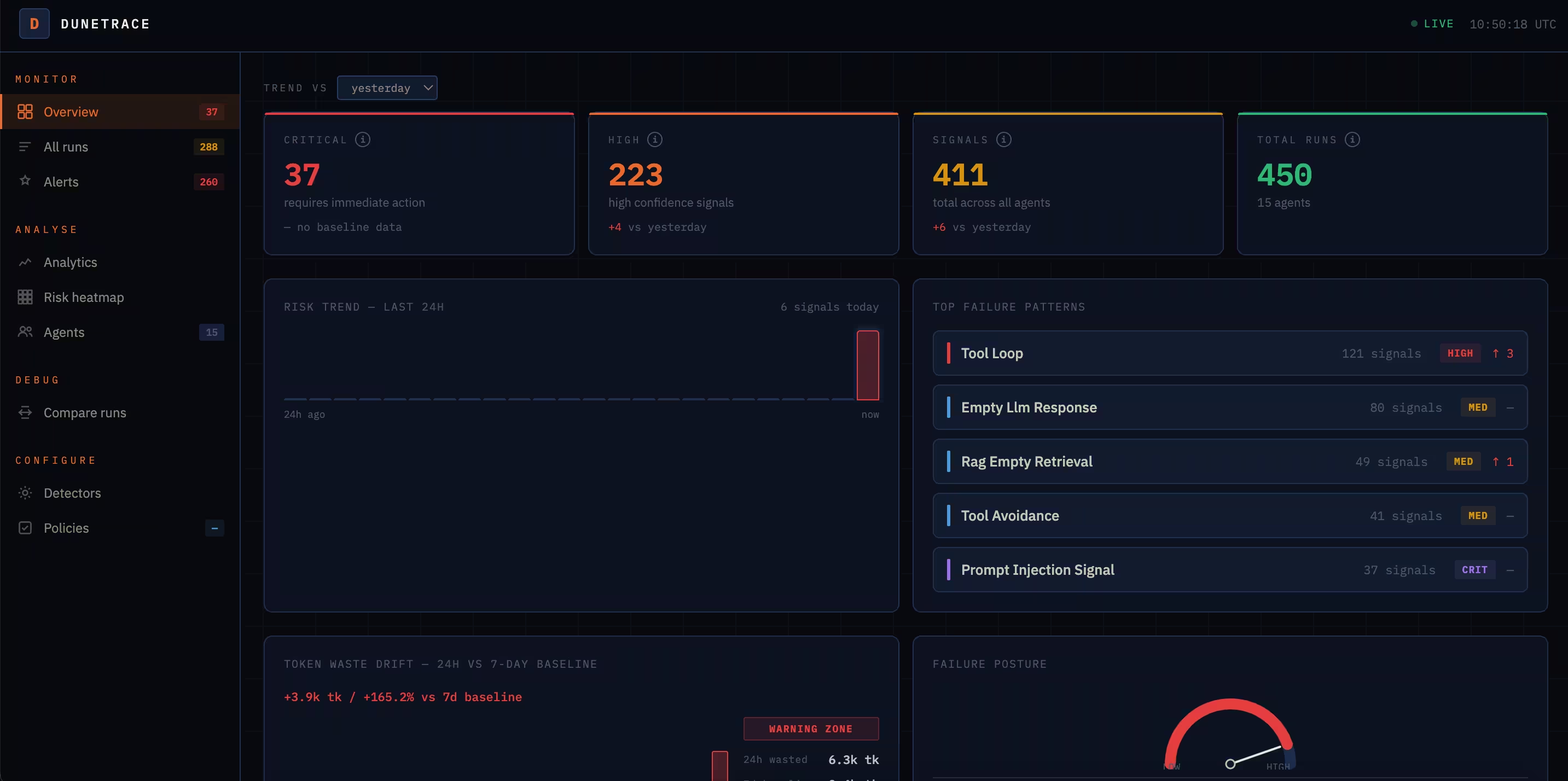

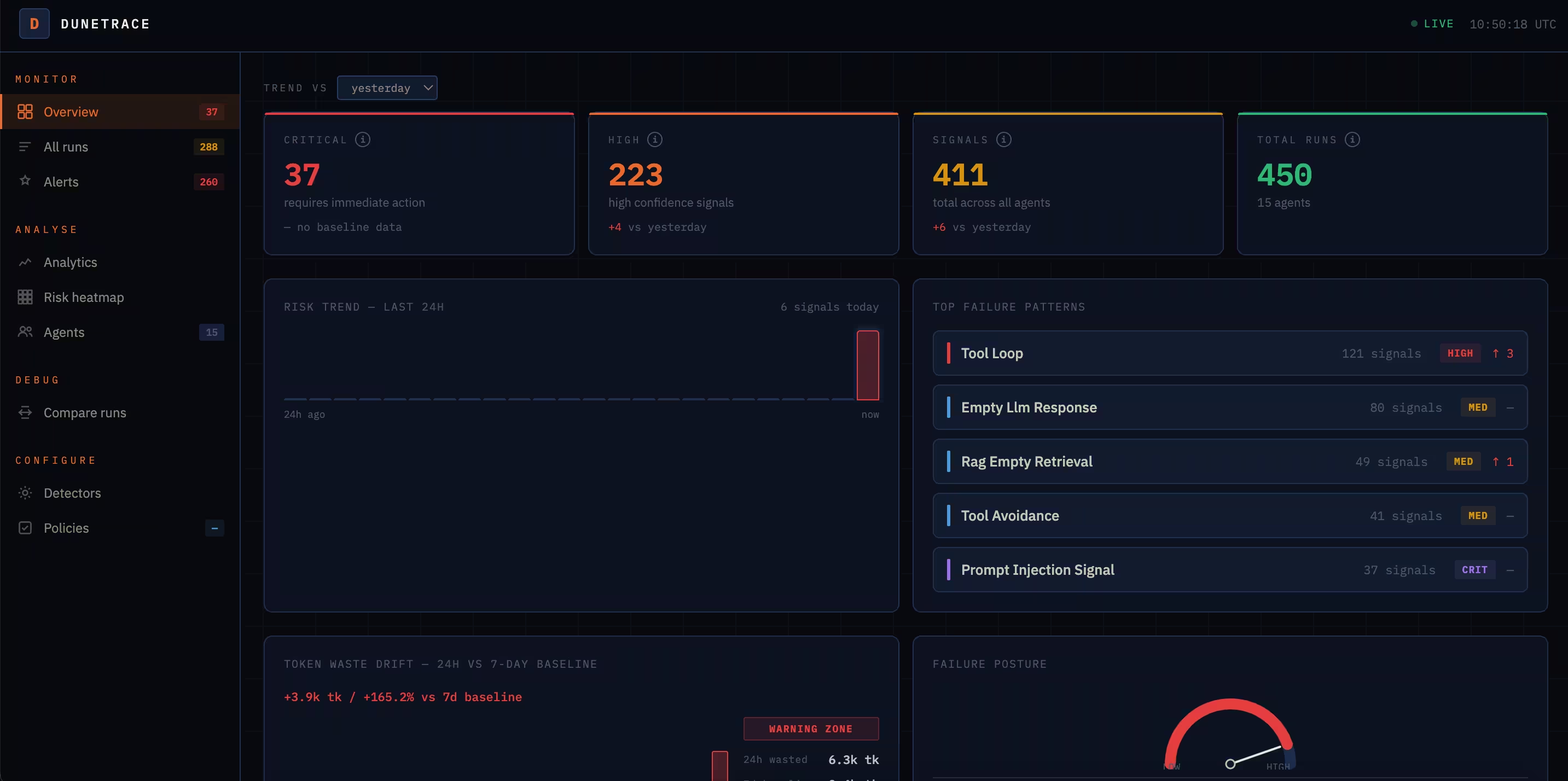

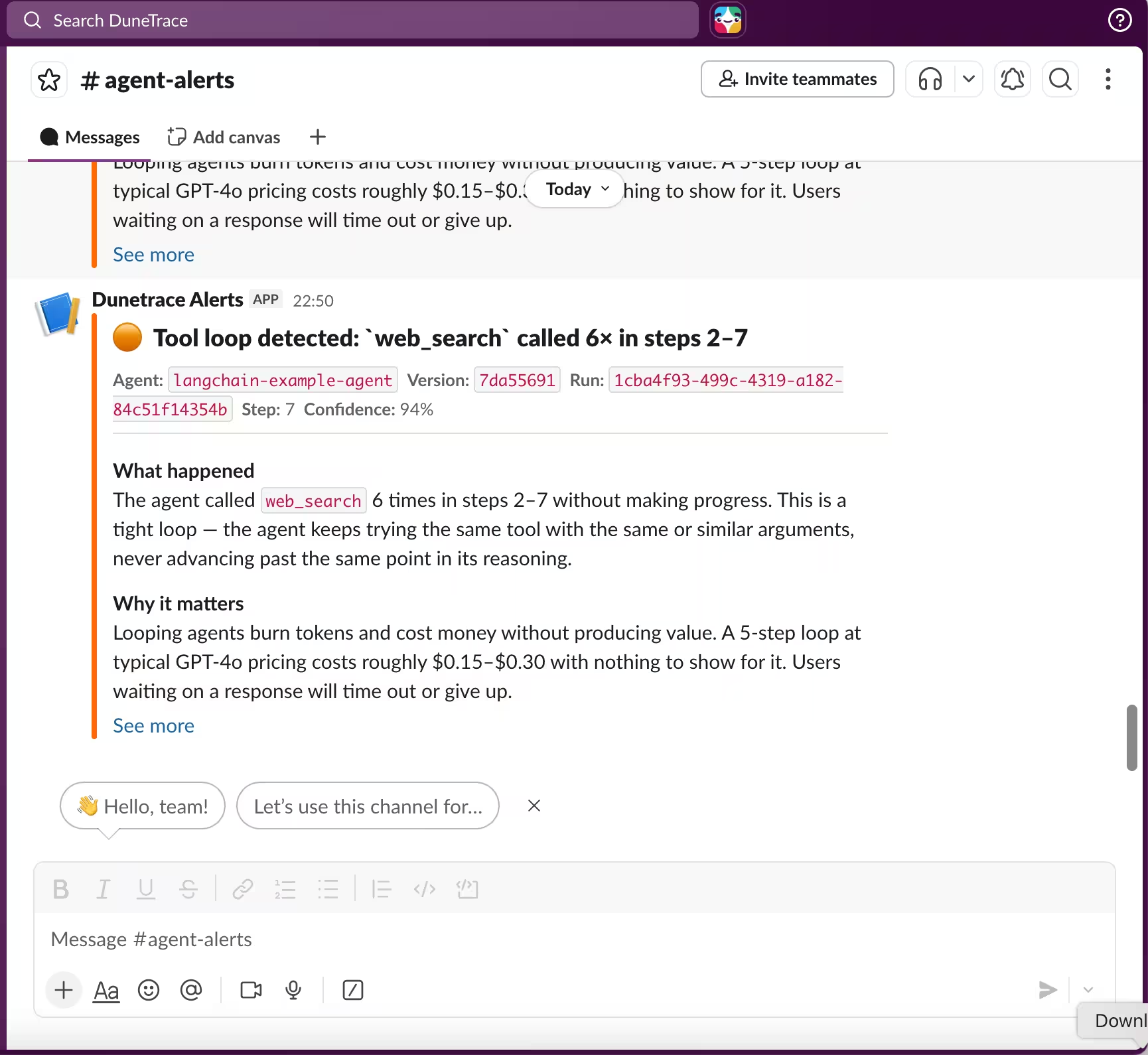

Traditional monitoring can't see inside agent runs. Dunetrace watches every structural pattern and fires a Slack alert within 15 seconds of a run completing.

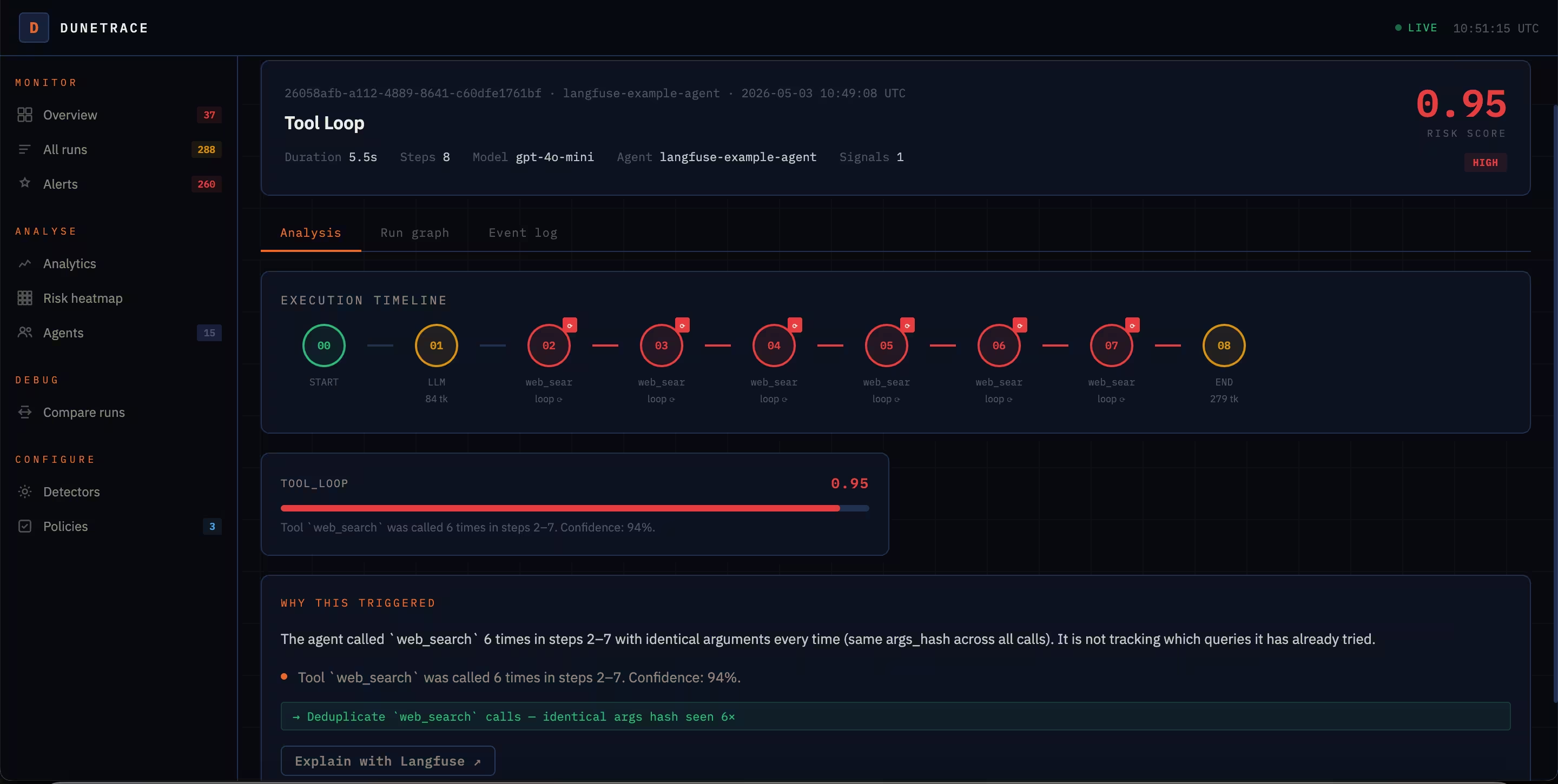

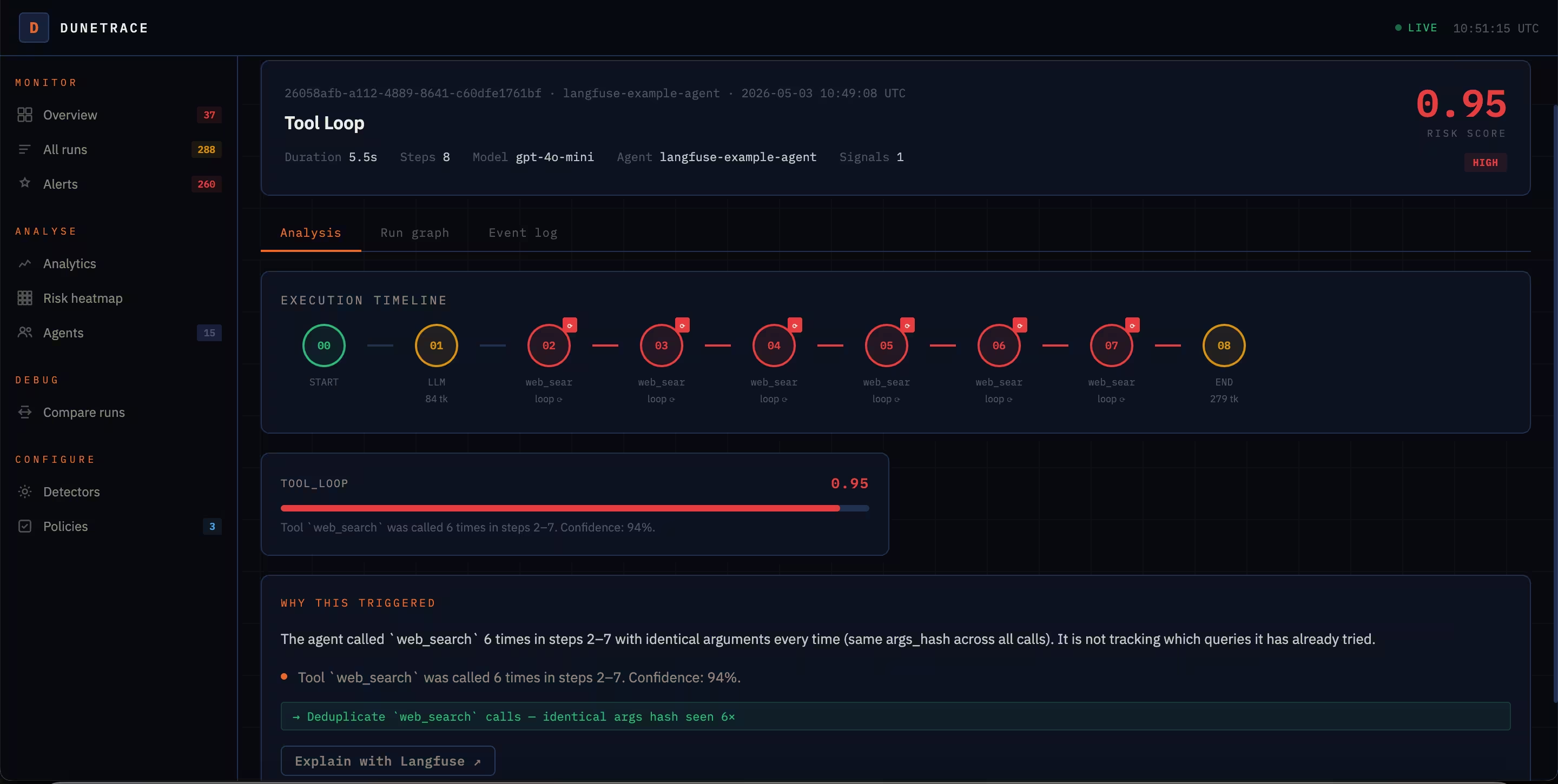

API returns 200. No exceptions. But the agent called the same tool 12 times, burned through your token budget, and delivered a wrong answer, or no answer at all.
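To make the "same tool 12 times" failure concrete, here is a minimal sketch of what a repeated-tool-call check could look like. It is an illustration only, not Dunetrace's detector: the function name `detect_tool_loop`, the `max_repeats` threshold, and the shape of the input (a list of tool names for one completed run) are all assumptions.

```python
from collections import Counter
from typing import Iterable, Optional, Tuple


def detect_tool_loop(
    tool_calls: Iterable[str], max_repeats: int = 5
) -> Optional[Tuple[str, int]]:
    """Return (tool, count) for the most-called tool if it exceeds max_repeats.

    Hypothetical helper: the threshold and input shape are assumptions.
    """
    counts = Counter(tool_calls)
    if not counts:
        return None  # a run with no tool calls cannot be looping
    tool, count = counts.most_common(1)[0]
    return (tool, count) if count > max_repeats else None


# A run that returned 200 with no exceptions, but called one tool 12 times.
run = ["search"] * 12 + ["summarize"]
print(detect_tool_loop(run))  # ('search', 12)
```

Nothing in this check depends on status codes or exceptions, which is why a run like this can look healthy to conventional monitoring.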

LangSmith and Langfuse answer "what happened?" after you already know something broke. Dunetrace answers a different question: is something breaking right now?

Two lines of Python. The SDK patches OpenAI, Anthropic, httpx, and requests globally. No code changes per agent.
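The actual two lines are in the integration docs and are not reproduced here. As a rough picture of what "patches globally" can mean in Python, the sketch below wraps a method on a class once so that every instance is observed without per-agent changes. `FakeLLMClient`, `patch_method`, and `recorded_events` are hypothetical stand-ins, not part of the Dunetrace SDK or of any real client library.

```python
import functools

recorded_events = []  # hypothetical in-memory sink for observed calls


class FakeLLMClient:
    """Stand-in for a third-party client class; not a real library."""

    def complete(self, prompt: str) -> str:
        return prompt.upper()


def patch_method(cls, method_name, on_call):
    """Replace cls.method_name with a wrapper that reports each call."""
    original = getattr(cls, method_name)

    @functools.wraps(original)
    def wrapper(self, *args, **kwargs):
        result = original(self, *args, **kwargs)
        on_call(method_name)  # record only the call's name, not its content
        return result

    setattr(cls, method_name, wrapper)
    return original  # returned so the caller can restore it later


# Patch once at startup; all existing and future instances are covered.
patch_method(FakeLLMClient, "complete", recorded_events.append)

client = FakeLLMClient()
client.complete("hello")
print(recorded_events)  # ['complete']
```

Because the wrapper is installed on the class rather than on an instance, agent code that constructs its own clients needs no modification.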

15 structural detectors run on every completed run. Events are SHA-256 hashed. No raw content ever leaves your process.

Slack or webhook. Plain-English explanation. Suggested fix. Rate context: first occurrence or systemic issue.

Every detector runs automatically on every completed run. All thresholds are configurable via detectors.yml, with no code changes.

All detectors run automatically. Shadow mode lets you validate custom detectors against real traffic before they page anyone.

Traditional monitoring never tells you. You find out when a user complains, then spend hours hunting through logs. Dunetrace fires a Slack alert within 15 seconds of a completed run, with a plain-English explanation and a suggested fix already attached.

Every prompt, tool argument, and model output is SHA-256 hashed before transmission. Dunetrace detects structural patterns, not content. Your raw data never leaves your infrastructure.
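A minimal sketch of hashing content before it leaves the process, using Python's standard `hashlib`. The event fields (`type`, `tool`, `args_hash`) and the helper names are assumptions made for illustration; the real event schema is not shown on this page.

```python
import hashlib
import json


def sha256_hex(text: str) -> str:
    """Return the SHA-256 digest of text as a 64-character hex string."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def to_structural_event(event_type: str, tool: str, arguments: dict) -> dict:
    """Build a hypothetical event carrying a hash in place of raw arguments."""
    # Serialize with sorted keys so equal arguments always hash identically.
    canonical = json.dumps(arguments, sort_keys=True, separators=(",", ":"))
    return {
        "type": event_type,
        "tool": tool,
        "args_hash": sha256_hex(canonical),
    }


first = to_structural_event("tool_call", "search", {"q": "refund policy", "k": 3})
second = to_structural_event("tool_call", "search", {"k": 3, "q": "refund policy"})

print(first["args_hash"] == second["args_hash"])  # True
print("refund policy" in json.dumps(first))       # False
```

Identical calls produce identical hashes, so a repeated-call pattern remains visible in the hashed events even though the arguments themselves are never sent.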

Drop-in support for every major Python agent framework and observability tool.

Dunetrace works alongside Langfuse, not instead of it. Langfuse tells you what happened in a run. Dunetrace tells you what went structurally wrong and fires an alert before you ever open a trace. Use both: get the alert from Dunetrace, then drill into the full trace in Langfuse for root cause analysis.

Instrument your agent in 5 minutes. Get your first alert before your next user does.

Read the integration docs ↗